Technology news, told as a power drama.

Editor’s note:

Silicon Drama is eTatos.com’s weekly series about the battle for AI, compute, chips, agents and robots. The goal is simple: Not just to report what happened, but to explain why it matters, who gains power, who loses control and where the next conflict is already forming.

This week, AI stopped looking like a product race and started looking like a power struggle for the real world. Sam Altman entered the courtroom while OpenAI pushed voice and agents deeper into work. Mira Murati attacked the old chatbot interface with real-time interaction models. Jensen Huang moved closer to the diplomatic table as chips, China and AI guardrails became geopolitical bargaining pieces. Meanwhile, humanoid robots made beds, sorted packages for more than 40 hours, prepared for German factories, transformed into mecha machines and even appeared as a robot monk. The question has shifted again: Not from chatbot to infrastructure this time, but from infrastructure to presence. AI is moving into the places where humans actually meet machines: The voice on the phone, the agent in the browser, the robot on the factory floor, the assistant in the car, the glasses on the face and the systems quietly defending or attacking the digital world.

Welcome to Silicon Drama Episode 03: The Interface War Enters the Real World.

Previously on Silicon Drama

AI had already left the chatbot window.

Last week, the story moved into infrastructure: compute, chips, clouds, energy, data centers, agents, robot hands and factory floors.

This week, the drama moved closer to the human body.

Not just the machine behind AI.

The moment we meet it.

The question is no longer only:

Who builds the intelligence?

The sharper question is:

Who owns the interface when intelligence starts speaking, seeing, moving, defending, buying, coding and working beside us?

The answer came from everywhere at once: A courtroom, a voice API, Mira Murati’s real-time interaction models, agentic browsers, AI glasses, car assistants, warehouse livestreams, robot labs, German factories, China talks and cyber defense reports.

The interface war is no longer theoretical.

It has entered the real world.

Opening Cast

Sam Altman

The Operator Under Trial

Power arena: OpenAI, Voice, Codex, Courtroom

Sam Altman is still one of the central faces of the AI age, but this week his role split into two versions of the same man: The builder pushing OpenAI deeper into voice, agents and enterprise deployment, and the executive defending OpenAI’s future in court against Elon Musk.

Mira Murati

The Interaction Architect

Power arena: Thinking Machines, Real-Time AI, Human-AI Collaboration

Mira Murati returned to the AI stage with a different kind of challenge. Not bigger models. Not louder hype. A new interface logic. Thinking Machines wants AI to stop waiting for clean turns and start collaborating in real time.

Jensen Huang

The Chip King at the Diplomatic Table

Power arena: Nvidia, Chips, China, Export Controls

Jensen Huang has become more than the man who sells the weapons of the AI race. This week, Nvidia’s chips became part of geopolitical bargaining, AI guardrail talks and the unresolved tension between Washington and Beijing.

Brett Adcock

The Body Builder

Power arena: Figure, Humanoid Work, Robot Endurance

Brett Adcock turned robot demos into must-watch tech television. Figure’s humanoids did not just make a bed. They also sorted packages live on YouTube for far longer than the originally planned eight-hour test.

Dario Amodei

The Prophet Building the Agent Army

Power arena: Anthropic, Agents, Cyber, Finance, Law

Dario Amodei remains the paradox of the AI race: The man warning about AI risk while Anthropic expands into coding, law, finance, enterprise agents and cyber-sensitive workflows.

Sundar Pichai

The Ecosystem Strategist

Power arena: Google, Gemini, Browser, Android

Sundar Pichai’s Google wants the interface back. Search, Chrome, Android, devices and Gemini are no longer separate products. They are entry points into an AI ecosystem.

Wang Xingxing

The Mecha Maker

Power arena: Unitree, GD01, Robotics Spectacle

Wang Xingxing’s Unitree brought the week’s pure sci-fi moment: A massive manned mecha that looks like anime crossing into the product catalog.

Artem Sokolov

The Factory Infiltrator

Power arena: Humanoid, Schaeffler, German Factories

Artem Sokolov brings the robot story down from spectacle into industrial reality. If Figure is the demo and Unitree is the trailer, Humanoid’s deal with Schaeffler is the factory floor.

Act I: The Trial of the AI Throne

The episode opens in the courtroom.

Not because the future of AI will be decided by lawyers alone, but because every empire eventually gets dragged into a room where its origin story is challenged.

Elon Musk’s legal battle against OpenAI has all the ingredients of classic Silicon Drama: Old alliances, broken trust, a founding mission, money, control and the question of who gets to define what OpenAI was supposed to become.

Musk argues that OpenAI betrayed its original nonprofit mission. OpenAI argues that commercial structure and massive capital were necessary to compete with Google, Meta, Anthropic and the rest of the AI arms race.

And Sam Altman stands at the center of it.

It is a fight over the meaning of the throne.

Was OpenAI supposed to be a public-interest laboratory? A commercial machine? A platform company? A deployment empire? A national strategic asset?

The courtroom is where the myth gets interrogated.

The drama is not only that Musk is attacking Altman. The drama is that the AI industry has reached a point where its founders are no longer just competing through products. They are competing through memory, ownership, mission and law.

The chatbot window was only the beginning.

The real fight is over who gets to own the future that came out of it.

Act II: The Interface War

While the courtroom fought over OpenAI’s past, the product race moved into the future.

And the future sounded like a voice.

OpenAI introduced new realtime audio models for the API, including GPT-Realtime-2, GPT-Realtime-Translate and GPT-Realtime-Whisper. On the surface, this looks like a product update. But the deeper meaning is larger.

Voice is not just another input method.

Voice is the interface that makes AI feel present.

A text agent waits. A voice agent listens. A text interface feels like software. A voice interface feels like someone in the room, on the phone, in the car, inside the headset or sitting between the human and the task.

That is why voice matters.

OpenAI is not only improving speech. It is trying to place itself at the point where people stop typing and start talking to machines that can act.

But then Mira Murati entered the episode.

Thinking Machines presented interaction models that aim to make AI more continuous, more responsive and more natural across audio, video and text. The point is to change the rhythm of human-AI collaboration.

Old AI waits for the prompt.

Murati’s bet is that the next AI should know when to speak, when to stay silent, when to interrupt, when to watch and when to act.

That is an attack on the old interface.

Most Human Scene of the Week: AI Stops Waiting

(Thinking Machines announcement and more demos: https://thinkingmachines.ai/blog/interaction-models/)

The interface war does not stop there.

Microsoft is bringing Copilot deeper into Edge with browser context, multi-tab reasoning, voice, vision and journeys. Amazon is turning Alexa into a shopping assistant that can act more deeply inside the Amazon experience. Rivian is bringing AI voice assistance into cars. Meta is opening Ray-Ban Display Glasses to developers, pushing AI into a wearable interface with camera, audio, display and gesture control.

The battlefield is no longer one app.

It is the browser.

It is the car.

It is the phone.

It is the glasses.

It is the shopping cart.

It is the workday.

It is the moment where a human reaches for help, and an AI system is already waiting at the edge of the task.

This week, the interface war became real.

Act III: The Machines Clock In

Then the episode cuts to a room.

Not a courtroom. Not a boardroom. Not a server room.

A bedroom.

Two Figure F.03 humanoid robots enter the scene and begin cleaning. They pick up objects, move through the room, coordinate around each other and make a bed.

It is a strange scene because it feels both ordinary and historic.

Ordinary, because the task is domestic.

Historic, because the machine is no longer just answering questions about the world. It is touching the world.

Most Cinematic Scene of the Week: Figure robots make a bed

The clip works because it makes the AI transition visible. The model is no longer an invisible system behind a prompt. It has arms, legs, cameras, timing, hesitation, coordination and physical limits.

But Brett Adcock’s Figure did not stop there.

What started as an eight-hour public endurance test became something much bigger. Figure’s humanoid robots sorted packages for more than 40 hours of continuous operation, with a rotating cast of robots and more than 50,000 packages processed.

The important detail: This was not one robot working alone for 40 hours without pause. It was a rotating robotic operation. That matters.

But it still changes the story.

A humanoid robot demo is interesting.

A humanoid robot shift is different.

A livestreamed humanoid robot shift that keeps going past the original target becomes a public proof attempt. It turns robotics into a spectator sport. Silicon Valley did not just watch a product video. It watched a machine try to become boring.

And in automation, boring is power.

Most Operational Scene of the Week: The Robot Didn’t Clock Out (Figure Livestream)

https://www.youtube.com/watch?v=luU57hMhkak

Of course, skepticism remains. A controlled setup is not the same as messy industrial reality. A livestream is not a deployment at scale. A robot sorting packages under defined conditions is not yet a general worker.

But the direction is clear.

The humanoid robot is no longer just walking across a stage.

It is clocking in.

Act IV: The Factory Infiltration

If Figure showed the robot as a worker on camera, Humanoid and Schaeffler showed the robot as an industrial plan.

Humanoid plans to deploy up to 2,000 humanoid robots at Schaeffler plants by 2032, with early testing in Germany. The first phase is expected to focus on sites such as Herzogenaurach and Schweinfurt, with tasks like box handling and factory-scale testing.

This is the less glamorous side of the robot race.

No cinematic bedroom.

No sci-fi mecha.

No viral livestream.

Just factories, timelines, logistics, material handling and the slow, expensive question of whether humanoid robots can actually fit into industrial work.

Industrial subplot:

Humanoids prepare for the factory floor

This is where Physical AI becomes less of a slogan and more of an operations problem.

Can humanoid robots handle repetitive factory tasks? Can they justify their cost? Can they work safely near humans? Can they adapt to real production environments? Can they move from controlled demos to actual shifts?

That is the industrial question behind the spectacle.

And behind that question is an even deeper one: How do these robots learn?

The answer is increasingly human motion data.

Startups are building datasets from human work, recording movements, routines, gestures, lifting, sorting, reaching and manipulating objects. AI learned from the internet. Robots are learning from hands.

Quiet signal:

Human Labor Becomes the Dataset

That may be one of the most important quiet shifts of the week.

The factory floor is no longer only a place where people work.

It is becoming a classroom for machines.

Act V: The Mecha Moment

Then Unitree kicked the door open with pure science fiction.

The GD01 is not a polite helper robot. It is a massive manned transformable mecha, promoted with dramatic movement, a cockpit, a heavy frame and the kind of visual language that makes the internet immediately think of Gundam, anime, exosuits and video games.

It is expensive. It is strange. It raises obvious questions about usefulness, safety, battery life and actual deployment.

But as a scene, it is unforgettable.

Most Sci-Fi Scene of the Week: The Mecha Goes Retail

The GD01 may not tell us what most robots will do tomorrow.

But it tells us something about the psychology of the robotics race.

Humanoid robotics is not only about usefulness. It is also about spectacle, cultural imagination and the power of making the future look unavoidable.

Figure made robots look like workers.

Humanoid made them look like factory assets.

Unitree made them look like science fiction escaped into the product catalog.

That matters because technology adoption is not driven by function alone.

It is also driven by desire.

And the mecha is desire with metal legs.

Act VI: The Monk in the Machine

And then, as if the week needed a stranger subplot, there was Gabi.

Gabi, a humanoid robot based on Unitree’s G1, appeared in South Korea as a robot monk at a Buddhist temple ceremony. Wearing robes, performing gestures and answering questions, Gabi became one of the most surreal robotics scenes of the week.

Most Surreal Scene of the Week: The Android Monk

This is not the main story of humanoid robotics.

But it is a perfect Silicon Drama side episode.

While one Unitree machine smashed blocks like something out of anime, another folded its hands in prayer.

The question behind Gabi is not whether a robot can truly understand spirituality. The question is what humans project onto machines once they begin to look, move and respond like social beings.

A warehouse robot is labor.

A mecha is fantasy.

A robot monk is symbolism.

And this week, humanoid robotics gave us all three.

Act VII: The AI Barons Go to Beijing

While robots learned to work, the chip war moved toward diplomacy.

Jensen Huang’s role this week became bigger than Nvidia’s product roadmap. Nvidia’s H200 chips became part of the US-China power equation, with reports that some Chinese companies were cleared to buy them, while actual deliveries remained politically and strategically complicated.

At the same time, the US and China discussed AI guardrails for powerful models, including concerns about misuse by non-state actors, cyber risks and the need for some kind of communication channel around frontier AI.

This is where Silicon Drama becomes geopolitical.

The chip is no longer just hardware.

It is leverage.

The model is no longer just software.

It is a security concern.

The CEO is no longer just a businessman.

He is a figure in the room where market access, export controls and strategic trust collide.

Political power move:

The AI Barons Go to Beijing

This scene may not have the visual drama of a robot making a bed or a mecha smashing blocks. But it may be one of the most important scenes of the episode.

Because the AI race is no longer contained inside Silicon Valley.

It is now negotiated between capitals.

And the unresolved part is the most dramatic part.

The chips may be approved.

But approval is not delivery.

And delivery is not trust.

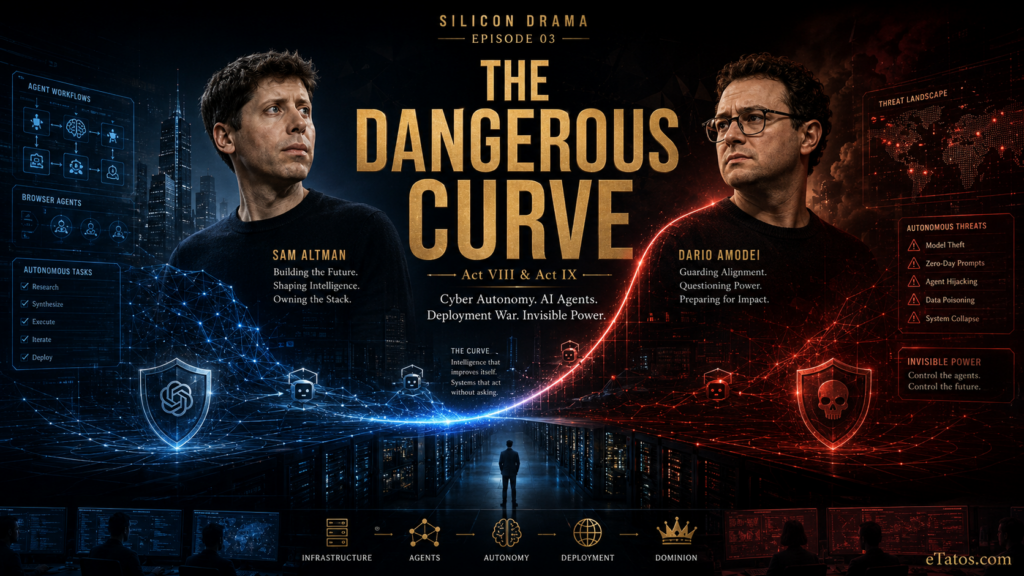

Act VIII: The Dangerous Curve

Every good power drama has a dark room.

This week, that room was cyber.

The UK AI Security Institute reported that the time horizon for autonomous cyber tasks in its narrow test environment appears to be doubling in months, not years. The exact number should be treated carefully. It does not mean AI can suddenly do every complex task for twice as long. It refers to a specific cyber benchmark environment, with limits and uncertainty.

But the signal is still important.

AI systems are getting better at staying on task.

They are chaining more steps.

They are working through longer sequences.

They are becoming more persistent.

Dangerous curve:

The Autonomy Clock

AISI analysis: https://www.aisi.gov.uk/blog/how-fast-is-autonomous-ai-cyber-capability-advancing

Then Google Threat Intelligence added the darker evidence.

Google reported that threat actors are already using AI for vulnerability discovery, exploit development, polymorphic malware, supply-chain attacks and autonomous attack workflows. One of the most dramatic examples was PROMPTSPY, an AI-assisted Android backdoor where a language model interprets the system state and generates actions like clicks or swipes.

That is the shadow version of the agent revolution.

If AI can navigate interfaces for productivity, it can also navigate interfaces for attack.

If AI can help developers, it can help exploiters.

If AI can act, it can be misused as an actor.

Most Dangerous Scene: The Malware Learned to Navigate

Google Threat Intelligence report: https://cloud.google.com/blog/topics/threat-intelligence/ai-vulnerability-exploitation-initial-access

OpenAI’s Daybreak initiative appears on the other side of the same battlefield: AI as cyber defense, vulnerability discovery, patch validation and security support.

But that is the tension.

The same type of capability can strengthen defenders and attackers.

The same autonomy that makes agents useful makes them dangerous.

The curve does not ask whether AI is good or evil.

It asks how long it can keep going.

Act IX: The Agent Deployment War

Our episode then moves from danger back into work.

OpenAI launched its Deployment Company, built around the idea that frontier AI needs more than an API. It needs teams that can push AI into real enterprises, workflows and operations.

That is an important shift.

Model companies are becoming deployment companies.

Anthropic is also moving aggressively. Claude is expanding across coding, legal workflows, finance, multi-agent systems and enterprise tooling. Dario Amodei’s Anthropic keeps the language of safety, but the company is also building the infrastructure of everyday AI work.

Notion is opening its workspace to external agents such as Claude Code, Codex, Cursor and Decagon, positioning itself as a home base for human teams and AI agents.

Amazon is putting more agentic shopping into Alexa.

Microsoft is making the browser more agentic.

OpenAI is bringing Codex to mobile, making it easier to monitor and manage coding tasks from a phone.

Practical power move:

Agents Enter the Workflow

This is less cinematic than the robots.

But it may be more economically important.

Most people will not meet a humanoid robot this year.

Many will meet an agent inside a browser, a work app, a shopping flow, a customer service call, a code editor or a phone.

That is why the interface war matters.

AI does not need to become a robot to enter the real world.

Sometimes it only needs to enter the tab you already have open.

Act X: The Pigeon Button Short

And then, somewhere above all the billion-dollar deals, diplomatic talks and robot shifts, three AI-generated pigeons stood on a New York rooftop and argued about a red button.

The short AI video by Marko Slavnic became one of the week’s funniest cinematic signals. It is absurd, polished, strange and exactly the kind of thing that shows where AI video is heading.

Not just demos.

Not just technical showcases.

Stories.

Little scenes.

Characters.

Comedic timing.

A creator with an idea and enough tool skill can now produce a tiny piece of entertainment that feels like it escaped from a streaming platform’s experimental short section.

Funniest Cinematic Scene of the Week: The Pigeon Button Short

This is the lighter side of the same revolution.

While Big Tech fights over chips and agents, small creators are discovering that cinematic production is becoming modular, generative and weirdly accessible.

The future may be built in data centers.

But it may also be tested on rooftops by three pigeons and a red button.

This Week’s Power Board

What This Week Really Means

This was the week the AI story moved from capability to contact.

Not just what the model can do.

Where it appears.

How it reaches us.

And who gets to stand between the human and the machine.

That is the real interface war.

A voice model is not only a better way to talk to software. It is a claim on the phone call, the customer-service line, the car ride, the meeting and the moment when a person asks for help without opening a laptop.

A browser agent is not only a productivity feature. It is a claim on the web itself, on the tab, the form, the search, the purchase and the workflow.

A humanoid robot is not only hardware. It is a claim on physical labor, on the warehouse aisle, the factory floor, the hotel room, the airport ramp and eventually the home.

A chip is not only a component. It is a claim on national leverage, industrial capacity and who gets enough compute to stay in the race.

And a cyber agent is not only a security tool. It is a claim on persistence, autonomy and the ability to act inside digital systems before humans can fully follow.

That is why this episode matters.

The AI race is no longer only about building intelligence.

It is about placing intelligence at every point of contact between people, machines, companies and states.

The winner may not be the company with the smartest answer.

The winner may be the one that controls the moment before the question is even asked.

The voice before the call ends.

The agent before the browser task begins.

The robot before the shift starts.

The chip before the model trains.

The guardrail before the system is misused.

AI is becoming useful, visible, physical, political and dangerous at the same time.

And once intelligence enters every interface, the real question is no longer whether AI will change the world.

It is who will own the doorways.

The Doorway Is Open

The chips were approved, but not fully delivered.

The robots clocked in, but the skeptics kept watching.

The voice agents got smoother, but the interface war only got sharper.

The cyber curve kept rising, but the guardrails are still being negotiated.

And somewhere between the courtroom, the factory, the mecha, the monk and the pigeons, one thing became clear:

AI is not just becoming smarter.

It is becoming harder to keep in one place.

This was Silicon Drama Episode 03.

The Interface War has entered the real world.

And next week, the question becomes even sharper: Who can still control it once every doorway starts opening at once?

If you want to follow the next episodes of Silicon Drama, subscribe to eTatos.com or our newsletter. The next power struggle is already forming.